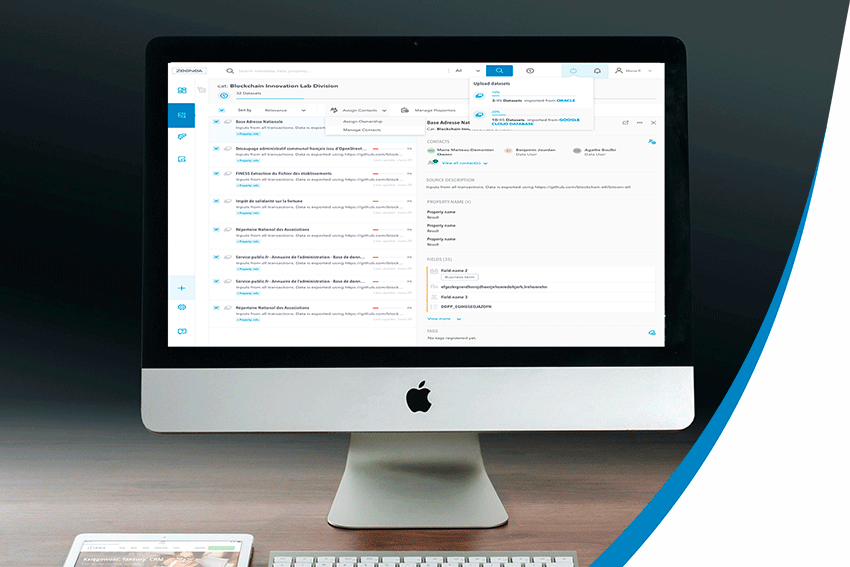

Data Catalog

Data is still widely considered an extremely technical domain. However, data innovation is only possible if data is shared and understood by the large majority of people within your company.

A data catalog makes this possible!

In 2017, Gartner declared data catalogs “the new black in data management and analytics”. Now, they have become a MUST-HAVE solution for data leaders!

In this section, discover all you need to know about the latest data cataloging news, features, and trends.

Harnessing the Power of AI in Data Cataloging

In today's era of expansive data volumes, AI stands at the forefront of revolutionizing how organizations manage ...

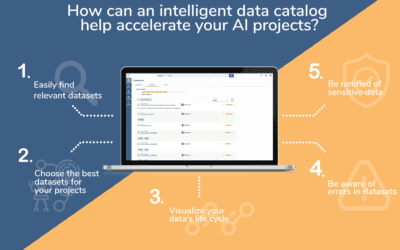

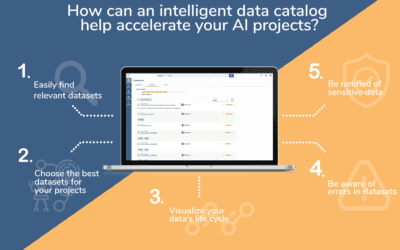

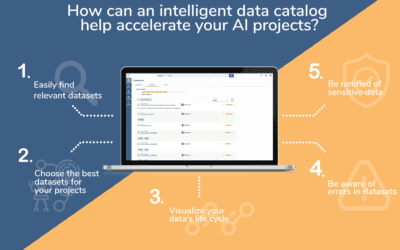

The Role of Data Catalogs in Accelerating AI Initiatives

In today's data-driven landscape, organizations increasingly rely on AI to gain insights, drive innovation, and ...

![[SERIES] Data Shopping Part 2 – The Zeenea Data Shopping Experience](https://zeenea.com/wp-content/uploads/2024/06/zeenea-data-shopping-experience-blog-image-400x250.png)

[SERIES] Data Shopping Part 2 – The Zeenea Data Shopping Experience

Just as shopping for goods online involves selecting items, adding them to a cart, and choosing delivery and ...

![[SERIES] Building a Marketplace for Data Mesh Part 3: Feeding the Marketplace via domain-specific data catalogs](https://zeenea.com/wp-content/uploads/2024/06/iStock-1418478531-400x250.jpg)

[SERIES] Building a Marketplace for Data Mesh Part 3: Feeding the Marketplace via domain-specific data catalogs

Over the past decade, data catalogs have emerged as important pillars in the landscape of data-driven initiatives. ...

![[SERIES] Building a Marketplace for Data Mesh Part 2: Setting up an enterprise-level marketplace](https://zeenea.com/wp-content/uploads/2024/06/iStock-1513818710-400x250.jpg)

[SERIES] Building a Marketplace for Data Mesh Part 2: Setting up an enterprise-level marketplace

Over the past decade, data catalogs have emerged as important pillars in the landscape of data-driven initiatives. ...

![[SERIES] Building a Marketplace for data mesh Part 1: Facilitating data product consumption through metadata](https://zeenea.com/wp-content/uploads/2024/05/iStock-1485944683-400x250.jpg)

[SERIES] Building a Marketplace for data mesh Part 1: Facilitating data product consumption through metadata

Over the past decade, data catalogs have emerged as important pillars in the landscape of data-driven initiatives. ...

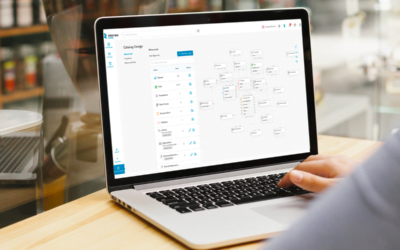

Why is a Data Catalog essential for Data Product Management?

Data Mesh is one of the hottest topics in the data space. In fact, according to a recent BARC Survey, 54% of ...

5 essential Zeenea features for a five-star Data Stewardship Program

You have data - and lots of it. However, it is messy, incomplete, and scattered into several different platforms, ...

The top 5 benefits of data lineage

Do you have the ambition to turn your organization into a data-driven enterprise? You cannot escape the need to ...

What is Data Sharing: benefits, challenges, and best practices

In the ever-evolving data and digital landscape, data sharing has become essential to drive business value. ...

5 Reasons to Enhance Your Data Catalog with an Enterprise Data Marketplace (EDM)

Over the past decade, Data Catalogs have emerged as important pillars in the landscape of data-driven initiatives. ...

![[Press Release] Zeenea launches its Enterprise Data Marketplace, revolutionizing Data Product Management](https://zeenea.com/wp-content/uploads/2024/01/launch-enterprise-data-marketplace-400x250.png)

[Press Release] Zeenea launches its Enterprise Data Marketplace, revolutionizing Data Product Management

Paris, January 23, 2024 - Zeenea, a leader in metadata management and data discovery solutions, today ignites a ...

Zeenea Product Recap: A look back at 2023

2023 was another big year for Zeenea. With more than 50 releases and updates to our platform, these past 12 months ...

Key takeaways from the Zeenea Exchange 2023: Unlocking the power of the enterprise Data Catalog

Each year, Zeenea organizes exclusive events that bring together our clients and partners from various ...

The Guide to Understanding the Difference Between a Business Glossary, a Data Catalog, and a Data Dictionary

You've put data at the center of your company's business strategy, but the amount of data you have to handle is ...

Enabling Data Literacy: 5 Ways a Data Catalog is Key

In today's data-driven world, organizations from all industries are collecting vast amounts of data from various ...

Don’t let these 4 Data Nightmares scare you – Zeenea is here to help

You wake up with your heart pounding. Your feet are trembling - Just moments ago you were being chased by ...

The state of data access in data-driven enterprises – BARC Data Culture Survey 23

Zeenea is a proud sponsor of BARC’s Data Culture Survey 23. Get your free copy here. In last year’s BARC Data ...

How does a Data Catalog reinforce the 4 fundamental principles of Data Mesh?

Introduction: what is data mesh?

As companies are becoming more aware of the importance of their data, they are ...

The traps to avoid for a successful data catalog project – Technical integration

Metadata management is an important component in a data management project and it requires more than just the data ...

The traps to avoid for a successful data catalog project – Project Leadership

Metadata management is an important component in a data management project and it requires more than just the data ...

The traps to avoid for a successful data catalog project – Internal sponsorship

Metadata management is an important component in a data management project and it requires more than just the data ...

The traps to avoid for a successful data catalog project – Data Culture

Metadata management is an important component in a data management project and it requires more than just the data ...

How does a data catalog help companies implement successful Data Stewardship programs?

By implementing a data stewardship program in your organization, you ensure not only the quality of your data but ...

Why Zeenea chose a Privacy by Design approach for its data catalog?

Since the beginning of the 21st century, we’ve been experiencing a true digital revolution. The world is ...

The 5 product values that strengthen Zeenea’s team cohesion & customer experience

To remain competitive, organizations must make decisions quickly, as the slightest mistake can lead to a waste of ...

Zeenea is now SOC 2 Type II compliant

Faced with the increase in cyber threats, organizations endure a slew of customer requests for security assurance. ...

Interview with Ruben Marco Ganzaroli – CDO at Autostrade per l’Italia

We are pleased to have been selected by Autostrade per l'Italia - a European leader among concessionaires for the ...

What makes a data catalog “smart”? #5 – User Experience

A data catalog harnesses enormous amounts of very diverse information - and its volume will grow ...

What makes a data catalog “smart”? #4 – The search engine

A data catalog harnesses enormous amounts of very diverse information - and its volume will grow ...

What makes a data catalog “smart”? #3 – Metadata Management

A data catalog harnesses enormous amounts of very diverse information - and its volume will grow ...

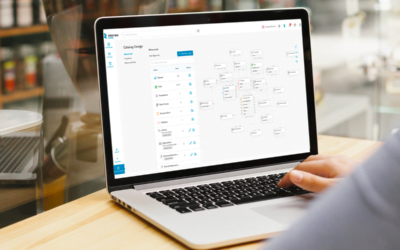

What makes a data catalog “smart”? #2 – The Data Inventory

A data catalog harnesses enormous amounts of very diverse information - and its volume will grow ...

What makes a data catalog “smart”? #1 – Metamodeling

A data catalog harnesses enormous amounts of very diverse information - and its volume will grow ...

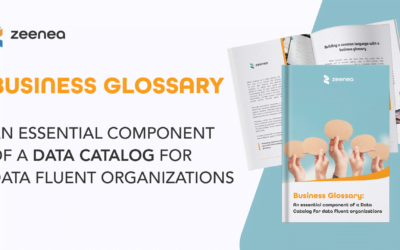

The business glossary: a productivity lever for a data catalog

An organization needs to handle vast volumes of technical assets that often carry a lot of duplicate information ...

Zeenea voted best data catalog on the market for data democratization – BARC

On October 20, BARC hosted an interactive webinar with three historical data catalog vendors - Alation, ...

How to build an efficient permission management system for a data catalog

An organization’s data catalog enhances all available data assets by relying on two types of information - on the ...

Exploiting the value of Data Lineage in the organization: A user-centric approach

In our previous article, we broke down Data Lineage by presenting the different lineage typologies (physical ...

Breaking down Data Lineage: typologies and granularity

As a concept, Data Lineage seems universal: whatever the sector of activity, any stakeholder in a data-driven ...

How pivoting to a SaaS model allowed 320 production releases in 6 months

After starting out as an on-premise data catalog solution, Zeenea made the decision to switch to a fully SaaS ...

What is Data Lineage?

In order to access and exploit your data assets on a regular basis, your organization will need to know ...

The Data catalog: an essential solution for metadata management

Your company produces or uses more and more data? To better classify, manage, and give meaning to your data, ...

Data Curation: essential for enhancing your data assets

Having large volumes of data isn’t enough: it’s what you make of it that counts! To make the most out of your ...

The 7 lies of Data Catalog Providers – #7 – A Data Catalog is complex…but isn’t complicated!

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

The 7 lies of Data Catalog Providers – #6 A Data Catalog must rely on automation!

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

Pricing your Enterprise Data Catalog: What should it really cost?

For the last couple of years, the Zeenea international sales team has been in contact with prospective clients the ...

The 7 lies of Data Catalog Providers – #5 A Data Catalog is not a Business Modeling Solution!

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

The 7 lies of Data Catalog Providers – #4 A Data Catalog is not a Query Solution!

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

What is Data Mesh?

In this new era of information, new terms are used in organizations working with data: Data Management Platform, ...

The 7 lies of Data Catalog Providers – #3 A Data Catalog is not a Compliance Solution!

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

The 7 lies of Data Catalog Providers – #2 A Data Catalog is NOT a Data Quality Management Solution

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

The 7 lies of Data Catalog Providers – #1 A Data Catalog is NOT a Data Governance Solution

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

Data mapping, the key to regulatory compliance

Regardless of the business sector, data management is a key strategic asset for companies. This information is key ...

IoT in manufacturing: why your enterprise needs a data catalog

Digital transformation has become a priority in organizations' business strategies and manufacturing industries ...

Machine Learning Data Catalogs: good but not good enough!

How can you benefit from a Machine Learning Data Catalog?

You can use Machine Learning Data Catalogs (MLDCs) to ...

What is a knowledge graph and how can it empower data catalog capabilities?

Knowledge graphs have been interacting with us for quite some time. Whether it be through personalized shopping ...

A smart data catalog, a must-have for data leaders

The term "smart data catalog" has become a buzzword over the past few months. However, when referring to something ...

Data science: accelerate your data lake initiatives with metadata

Data lakes offer an unlimited storage for data and present lots of potential benefits for data scientists in the ...

DataOps: How data catalogs enable better data discovery in a Big Data project

In today’s world, Big Data environments are more and more complex and difficult to manage. We believe that Big ...

How you’re going to fail your data catalog project (or not…)

There are many solutions on the data catalog market that offer an overview of all enterprise data all thanks to ...

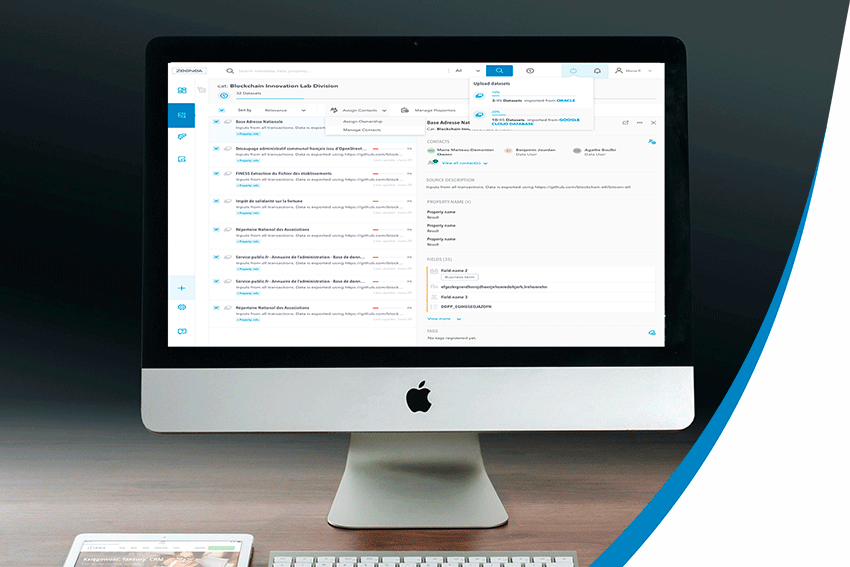

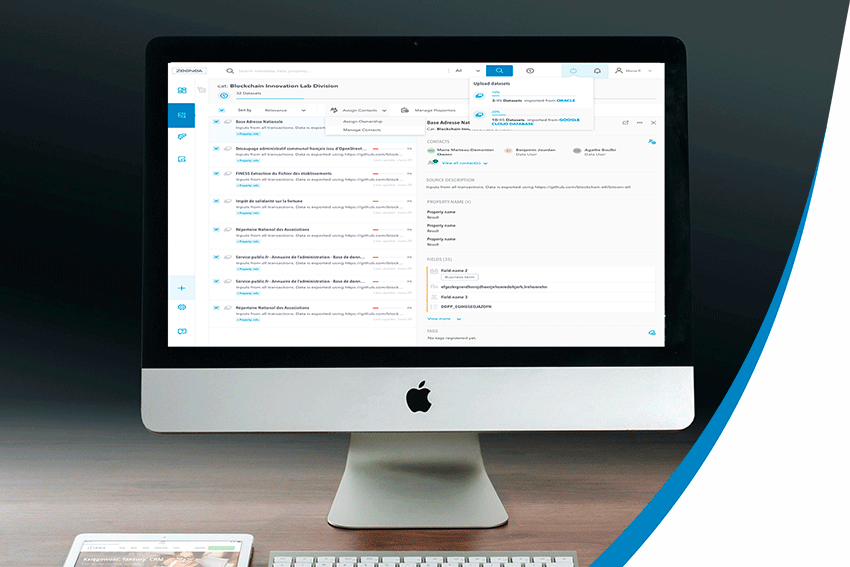

How does Zeenea Data Catalog empower your data teams?

Data has become one of the main drivers for innovation for many sectors. And as data continues to rapidly ...

How to evaluate your future Data Catalog?

The explosion of data sources in organizations, the heterogeneity of data or even the new demands related to data ...

What is the difference between a data dictionary and a business glossary?

In metadata management, we often talk about data dictionaries and business glossaries. Although they might ...

Data Revolutions: Towards a Business Vision of Data

The use of massive data by the internet giants in the 2000s was a wake-up call for enterprises: Big Data is a ...

What is a Chief Data Officer

According to a Gartner study presented at the Data & Analytics conference in London 2019, 90% of large ...

How Artificial Intelligence enhances data catalogs

Can machines think? We are talking about artificial intelligence, “the biggest myth of our time”! A simple ...

Data catalog: a self-service data platform

A data catalog is a portal that brings metadata on collected data sets together by the enterprise. This ...

What are the different types of metadata?

Dealing with large volumes of data is essential to any organization's success. But knowing what kind of data it ...

What is a Data Steward?

Data stewards are the first point of reference for data and serve as an entry point for data access. They have ...

What is a Data Catalog?

It is no secret that the enormous volumes of information that companies generate require the right tools ...

Data mapping: The challenges in an organization

The arrival of Big Data did not simplify how enterprises work with data. The volume, the variety, and the ...

How to map your information system’s data?

Data lineage is defined as the life cycle of data: its origin, movements, and impacts over time. It offers ...

Data lineage in a big data environment

Data lineage is defined as a type of data life cycle. It is a detailed representation of any data over ...

No results found.

U

Harnessing the Power of AI in Data Cataloging

In today's era of expansive data volumes, AI stands at the forefront of revolutionizing how organizations manage ...

The Role of Data Catalogs in Accelerating AI Initiatives

In today's data-driven landscape, organizations increasingly rely on AI to gain insights, drive innovation, and ...

![[SERIES] Data Shopping Part 2 – The Zeenea Data Shopping Experience](https://zeenea.com/wp-content/uploads/2024/06/zeenea-data-shopping-experience-blog-image-400x250.png)

[SERIES] Data Shopping Part 2 – The Zeenea Data Shopping Experience

Just as shopping for goods online involves selecting items, adding them to a cart, and choosing delivery and ...

![[SERIES] Building a Marketplace for Data Mesh Part 3: Feeding the Marketplace via domain-specific data catalogs](https://zeenea.com/wp-content/uploads/2024/06/iStock-1418478531-400x250.jpg)

[SERIES] Building a Marketplace for Data Mesh Part 3: Feeding the Marketplace via domain-specific data catalogs

Over the past decade, data catalogs have emerged as important pillars in the landscape of data-driven initiatives. ...

![[SERIES] Building a Marketplace for Data Mesh Part 2: Setting up an enterprise-level marketplace](https://zeenea.com/wp-content/uploads/2024/06/iStock-1513818710-400x250.jpg)

[SERIES] Building a Marketplace for Data Mesh Part 2: Setting up an enterprise-level marketplace

Over the past decade, data catalogs have emerged as important pillars in the landscape of data-driven initiatives. ...

![[SERIES] Building a Marketplace for data mesh Part 1: Facilitating data product consumption through metadata](https://zeenea.com/wp-content/uploads/2024/05/iStock-1485944683-400x250.jpg)

[SERIES] Building a Marketplace for data mesh Part 1: Facilitating data product consumption through metadata

Over the past decade, data catalogs have emerged as important pillars in the landscape of data-driven initiatives. ...

Why is a Data Catalog essential for Data Product Management?

Data Mesh is one of the hottest topics in the data space. In fact, according to a recent BARC Survey, 54% of ...

5 essential Zeenea features for a five-star Data Stewardship Program

You have data - and lots of it. However, it is messy, incomplete, and scattered into several different platforms, ...

The top 5 benefits of data lineage

Do you have the ambition to turn your organization into a data-driven enterprise? You cannot escape the need to ...

What is Data Sharing: benefits, challenges, and best practices

In the ever-evolving data and digital landscape, data sharing has become essential to drive business value. ...

5 Reasons to Enhance Your Data Catalog with an Enterprise Data Marketplace (EDM)

Over the past decade, Data Catalogs have emerged as important pillars in the landscape of data-driven initiatives. ...

![[Press Release] Zeenea launches its Enterprise Data Marketplace, revolutionizing Data Product Management](https://zeenea.com/wp-content/uploads/2024/01/launch-enterprise-data-marketplace-400x250.png)

[Press Release] Zeenea launches its Enterprise Data Marketplace, revolutionizing Data Product Management

Paris, January 23, 2024 - Zeenea, a leader in metadata management and data discovery solutions, today ignites a ...

Zeenea Product Recap: A look back at 2023

2023 was another big year for Zeenea. With more than 50 releases and updates to our platform, these past 12 months ...

Key takeaways from the Zeenea Exchange 2023: Unlocking the power of the enterprise Data Catalog

Each year, Zeenea organizes exclusive events that bring together our clients and partners from various ...

The Guide to Understanding the Difference Between a Business Glossary, a Data Catalog, and a Data Dictionary

You've put data at the center of your company's business strategy, but the amount of data you have to handle is ...

Enabling Data Literacy: 5 Ways a Data Catalog is Key

In today's data-driven world, organizations from all industries are collecting vast amounts of data from various ...

Don’t let these 4 Data Nightmares scare you – Zeenea is here to help

You wake up with your heart pounding. Your feet are trembling - Just moments ago you were being chased by ...

The state of data access in data-driven enterprises – BARC Data Culture Survey 23

Zeenea is a proud sponsor of BARC’s Data Culture Survey 23. Get your free copy here.In last year’s BARC Data ...

How does a Data Catalog reinforce the 4 fundamental principles of Data Mesh?

Introduction: what is data mesh?

As companies are becoming more aware of the importance of their data, they are ...

The traps to avoid for a successful data catalog project – Technical integration

Metadata management is an important component in a data management project and it requires more than just the data ...

The traps to avoid for a successful data catalog project – Project Leadership

Metadata management is an important component in a data management project and it requires more than just the data ...

The traps to avoid for a successful data catalog project – Internal sponsorship

Metadata management is an important component in a data management project and it requires more than just the data ...

The traps to avoid for a successful data catalog project – Data Culture

Metadata management is an important component in a data management project and it requires more than just the data ...

How does a data catalog help companies implement successful Data Stewardship programs?

By implementing a data stewardship program in your organization, you ensure not only the quality of your data but ...

Why Zeenea chose a Privacy by Design approach for its data catalog?

Since the beginning of the 21st century, we’ve been experiencing a true digital revolution. The world is ...

The 5 product values that strengthen Zeenea’s team cohesion & customer experience

To remain competitive, organizations must make decisions quickly, as the slightest mistake can lead to a waste of ...

Zeenea is now SOC 2 Type II compliant

Faced with the increase in cyber threats, organizations endure a slew of customer requests for security assurance. ...

Interview with Ruben Marco Ganzaroli – CDO at Autostrade per l’Italia

We are pleased to have been selected by Autostrade per l'Italia - a European leader among concessionaires for the ...

What makes a data catalog “smart”? #5 – User Experience

A data catalog harnesses enormous amounts of very diverse information - and its volume will grow ...

What makes a data catalog “smart”? #4 – The search engine

A data catalog harnesses enormous amounts of very diverse information - and its volume will grow ...

What makes a data catalog “smart”? #3 – Metadata Management

A data catalog harnesses enormous amounts of very diverse information - and its volume will grow ...

What makes a data catalog “smart”? #2 – The Data Inventory

A data catalog harnesses enormous amounts of very diverse information - and its volume will grow ...

What makes a data catalog “smart”? #1 – Metamodeling

A data catalog harnesses enormous amounts of very diverse information - and its volume will grow ...

The business glossary: a productivity lever for a data catalog

An organization needs to handle vast volumes of technical assets that often carry a lot of duplicate information ...

Zeenea voted best data catalog on the market for data democratization – BARC

On October 20, BARC hosted an interactive webinar with three historical data catalog vendors - Alation, ...

How to build an efficient permission management system for a data catalog

An organization’s data catalog enhances all available data assets by relying on two types of information - on the ...

Exploiting the value of Data Lineage in the organization: A user-centric approach

In our previous article, we broke down Data Lineage by presenting the different lineage typologies (physical ...

Breaking down Data Lineage: typologies and granularity

As a concept, Data Lineage seems universal: whatever the sector of activity, any stakeholder in a data-driven ...

How pivoting to a SaaS model allowed 320 production releases in 6 months

After starting out as an on-premise data catalog solution, Zeenea made the decision to switch to a fully SaaS ...

What is Data Lineage?

In order to access and exploit your data assets on a regular basis, your organization will need to know ...

The Data catalog: an essential solution for metadata management

Your company produces or uses more and more data? To better classify, manage, and give meaning to your data, ...

Data Curation: essential for enhancing your data assets

Having large volumes of data isn’t enough: it’s what you make of it that counts! To make the most out of your ...

The 7 lies of Data Catalog Providers – #7 – A Data Catalog is complex…but isn’t complicated!

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

The 7 lies of Data Catalog Providers – #6 A Data Catalog must rely on automation!

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

Pricing your Enterprise Data Catalog: What should it really cost?

For the last couple of years, the Zeenea international sales team has been in contact with prospective clients the ...

The 7 lies of Data Catalog Providers – #5 A Data Catalog is not a Business Modeling Solution!

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

The 7 lies of Data Catalog Providers – #4 A Data Catalog is not a Query Solution!

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

What is Data Mesh?

In this new era of information, new terms are used in organizations working with data: Data Management Platform, ...

The 7 lies of Data Catalog Providers – #3 A Data Catalog is not a Compliance Solution!

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

The 7 lies of Data Catalog Providers – #2 A Data Catalog is NOT a Data Quality Management Solution

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

The 7 lies of Data Catalog Providers – #1 A Data Catalog is NOT a Data Governance Solution

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

Data mapping, the key to regulatory compliance

Regardless of the business sector, data management is a key strategic asset for companies. This information is key ...

IoT in manufacturing: why your enterprise needs a data catalog

Digital transformation has become a priority in organizations' business strategies and manufacturing industries ...

Machine Learning Data Catalogs: good but not good enough!

How can you benefit from a Machine Learning Data Catalog?

You can use Machine Learning Data Catalogs (MLDCs) to ...

What is a knowledge graph and how can it empower data catalog capabilities?

Knowledge graphs have been interacting with us for quite some time. Whether it be through personalized shopping ...

A smart data catalog, a must-have for data leaders

The term "smart data catalog" has become a buzzword over the past few months. However, when referring to something ...

Data science: accelerate your data lake initiatives with metadata

Data lakes offer an unlimited storage for data and present lots of potential benefits for data scientists in the ...

DataOps: How data catalogs enable better data discovery in a Big Data project

In today’s world, Big Data environments are more and more complex and difficult to manage. We believe that Big ...

How you’re going to fail your data catalog project (or not…)

There are many solutions on the data catalog market that offer an overview of all enterprise data all thanks to ...

How does Zeenea Data Catalog empower your data teams?

Data has become one of the main drivers for innovation for many sectors.And as data continues to rapidly ...

How to evaluate your future Data Catalog?

The explosion of data sources in organizations, the heterogeneity of data or even the new demands related to data ...

What is the difference between a data dictionary and a business glossary?

In metadata management, we often talk about data dictionaries and business glossaries. Although they might ...

Data Revolutions: Towards a Business Vision of Data

The use of massive data by the internet giants in the 2000s was a wake-up call for enterprises: Big Data is a ...

What is a Chief Data Officer

According to a Gartner study presented at the Data & Analytics conference in London 2019, 90% of large ...

How Artificial Intelligence enhances data catalogs

Can machines think? We are talking about artificial intelligence, “the biggest myth of our time”!A simple ...

Data catalog: a self-service data platform

A data catalog is a portal that brings metadata on collected data sets together by the enterprise. This ...

What are the different types of metadata?

Dealing with large volumes of data is essential to any organization's success. But knowing what kind of data it ...

What is a Data Steward?

Data stewards are the first point of reference for data and serve as an entry point for data access. They have ...

What is a Data Catalog?

It is no secret that the enormous volumes of information that companies generate require the right tools ...

Data mapping: The challenges in an organization

The arrival of Big Data did not simplify how enterprises work with data. The volume, the variety, and the ...

How to map your information system’s data?

Data lineage is defined as the life cycle of data: its origin, movements, and impacts over time. It offers ...

Data lineage in a big data environment

Data lineage is defined as a type of data life cycle. It is a detailed representation of any data over ...

No results found.

U

Harnessing the Power of AI in Data Cataloging

In today's era of expansive data volumes, AI stands at the forefront of revolutionizing how organizations manage ...

The Role of Data Catalogs in Accelerating AI Initiatives

In today's data-driven landscape, organizations increasingly rely on AI to gain insights, drive innovation, and ...

![[SERIES] Data Shopping Part 2 – The Zeenea Data Shopping Experience](https://zeenea.com/wp-content/uploads/2024/06/zeenea-data-shopping-experience-blog-image-400x250.png)

[SERIES] Data Shopping Part 2 – The Zeenea Data Shopping Experience

Just as shopping for goods online involves selecting items, adding them to a cart, and choosing delivery and ...

![[SERIES] Building a Marketplace for Data Mesh Part 3: Feeding the Marketplace via domain-specific data catalogs](https://zeenea.com/wp-content/uploads/2024/06/iStock-1418478531-400x250.jpg)

[SERIES] Building a Marketplace for Data Mesh Part 3: Feeding the Marketplace via domain-specific data catalogs

Over the past decade, data catalogs have emerged as important pillars in the landscape of data-driven initiatives. ...

![[SERIES] Building a Marketplace for Data Mesh Part 2: Setting up an enterprise-level marketplace](https://zeenea.com/wp-content/uploads/2024/06/iStock-1513818710-400x250.jpg)

[SERIES] Building a Marketplace for Data Mesh Part 2: Setting up an enterprise-level marketplace

Over the past decade, data catalogs have emerged as important pillars in the landscape of data-driven initiatives. ...

![[SERIES] Building a Marketplace for data mesh Part 1: Facilitating data product consumption through metadata](https://zeenea.com/wp-content/uploads/2024/05/iStock-1485944683-400x250.jpg)

[SERIES] Building a Marketplace for data mesh Part 1: Facilitating data product consumption through metadata

Over the past decade, data catalogs have emerged as important pillars in the landscape of data-driven initiatives. ...

Why is a Data Catalog essential for Data Product Management?

Data Mesh is one of the hottest topics in the data space. In fact, according to a recent BARC Survey, 54% of ...

5 essential Zeenea features for a five-star Data Stewardship Program

You have data - and lots of it. However, it is messy, incomplete, and scattered into several different platforms, ...

The top 5 benefits of data lineage

Do you have the ambition to turn your organization into a data-driven enterprise? You cannot escape the need to ...

What is Data Sharing: benefits, challenges, and best practices

In the ever-evolving data and digital landscape, data sharing has become essential to drive business value. ...

5 Reasons to Enhance Your Data Catalog with an Enterprise Data Marketplace (EDM)

Over the past decade, Data Catalogs have emerged as important pillars in the landscape of data-driven initiatives. ...

![[Press Release] Zeenea launches its Enterprise Data Marketplace, revolutionizing Data Product Management](https://zeenea.com/wp-content/uploads/2024/01/launch-enterprise-data-marketplace-400x250.png)

[Press Release] Zeenea launches its Enterprise Data Marketplace, revolutionizing Data Product Management

Paris, January 23, 2024 - Zeenea, a leader in metadata management and data discovery solutions, today ignites a ...

Zeenea Product Recap: A look back at 2023

2023 was another big year for Zeenea. With more than 50 releases and updates to our platform, these past 12 months ...

Key takeaways from the Zeenea Exchange 2023: Unlocking the power of the enterprise Data Catalog

Each year, Zeenea organizes exclusive events that bring together our clients and partners from various ...

The Guide to Understanding the Difference Between a Business Glossary, a Data Catalog, and a Data Dictionary

You've put data at the center of your company's business strategy, but the amount of data you have to handle is ...

Enabling Data Literacy: 5 Ways a Data Catalog is Key

In today's data-driven world, organizations from all industries are collecting vast amounts of data from various ...

Don’t let these 4 Data Nightmares scare you – Zeenea is here to help

You wake up with your heart pounding. Your feet are trembling. Just moments ago you were being chased by ...

The state of data access in data-driven enterprises – BARC Data Culture Survey 23

Zeenea is a proud sponsor of BARC’s Data Culture Survey 23. Get your free copy here. In last year’s BARC Data ...

How does a Data Catalog reinforce the 4 fundamental principles of Data Mesh?

Introduction: what is data mesh? As companies are becoming more aware of the importance of their data, they are ...

The traps to avoid for a successful data catalog project – Technical integration

Metadata management is an important component in a data management project and it requires more than just the data ...

The traps to avoid for a successful data catalog project – Project Leadership

Metadata management is an important component in a data management project and it requires more than just the data ...

The traps to avoid for a successful data catalog project – Internal sponsorship

Metadata management is an important component in a data management project and it requires more than just the data ...

The traps to avoid for a successful data catalog project – Data Culture

Metadata management is an important component in a data management project and it requires more than just the data ...

How does a data catalog help companies implement successful Data Stewardship programs?

By implementing a data stewardship program in your organization, you ensure not only the quality of your data but ...

Why did Zeenea choose a Privacy by Design approach for its data catalog?

Since the beginning of the 21st century, we’ve been experiencing a true digital revolution. The world is ...

The 5 product values that strengthen Zeenea’s team cohesion & customer experience

To remain competitive, organizations must make decisions quickly, as the slightest mistake can lead to a waste of ...

Zeenea is now SOC 2 Type II compliant

Faced with the increase in cyber threats, organizations endure a slew of customer requests for security assurance. ...

Interview with Ruben Marco Ganzaroli – CDO at Autostrade per l’Italia

We are pleased to have been selected by Autostrade per l'Italia - a European leader among concessionaires for the ...

What makes a data catalog “smart”? #5 – User Experience

A data catalog harnesses enormous amounts of very diverse information - and its volume will grow ...

What makes a data catalog “smart”? #4 – The search engine

A data catalog harnesses enormous amounts of very diverse information - and its volume will grow ...

What makes a data catalog “smart”? #3 – Metadata Management

A data catalog harnesses enormous amounts of very diverse information - and its volume will grow ...

What makes a data catalog “smart”? #2 – The Data Inventory

A data catalog harnesses enormous amounts of very diverse information - and its volume will grow ...

What makes a data catalog “smart”? #1 – Metamodeling

A data catalog harnesses enormous amounts of very diverse information - and its volume will grow ...

The business glossary: a productivity lever for a data catalog

An organization needs to handle vast volumes of technical assets that often carry a lot of duplicate information ...

Zeenea voted best data catalog on the market for data democratization – BARC

On October 20, BARC hosted an interactive webinar with three historical data catalog vendors - Alation, ...

How to build an efficient permission management system for a data catalog

An organization’s data catalog enhances all available data assets by relying on two types of information - on the ...

Exploiting the value of Data Lineage in the organization: A user-centric approach

In our previous article, we broke down Data Lineage by presenting the different lineage typologies (physical ...

Breaking down Data Lineage: typologies and granularity

As a concept, Data Lineage seems universal: whatever the sector of activity, any stakeholder in a data-driven ...

How pivoting to a SaaS model allowed 320 production releases in 6 months

After starting out as an on-premise data catalog solution, Zeenea made the decision to switch to a fully SaaS ...

What is Data Lineage?

In order to access and exploit your data assets on a regular basis, your organization will need to know ...

The Data catalog: an essential solution for metadata management

Does your company produce or use more and more data? To better classify, manage, and give meaning to your data, ...

Data Curation: essential for enhancing your data assets

Having large volumes of data isn’t enough: it’s what you make of it that counts! To make the most out of your ...

The 7 lies of Data Catalog Providers – #7 – A Data Catalog is complex…but isn’t complicated!

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

The 7 lies of Data Catalog Providers – #6 A Data Catalog must rely on automation!

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

Pricing your Enterprise Data Catalog: What should it really cost?

For the last couple of years, the Zeenea international sales team has been in contact with prospective clients the ...

The 7 lies of Data Catalog Providers – #5 A Data Catalog is not a Business Modeling Solution!

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

The 7 lies of Data Catalog Providers – #4 A Data Catalog is not a Query Solution!

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

What is Data Mesh?

In this new era of information, new terms are used in organizations working with data: Data Management Platform, ...

The 7 lies of Data Catalog Providers – #3 A Data Catalog is not a Compliance Solution!

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

The 7 lies of Data Catalog Providers – #2 A Data Catalog is NOT a Data Quality Management Solution

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

The 7 lies of Data Catalog Providers – #1 A Data Catalog is NOT a Data Governance Solution

The Data Catalog market has developed rapidly, and it is now deemed essential when deploying a data-driven ...

Data mapping, the key to regulatory compliance

Regardless of the business sector, data management is a key strategic asset for companies. This information is key ...

IoT in manufacturing: why your enterprise needs a data catalog

Digital transformation has become a priority in organizations' business strategies and manufacturing industries ...

Machine Learning Data Catalogs: good but not good enough!

How can you benefit from a Machine Learning Data Catalog? You can use Machine Learning Data Catalogs (MLDCs) to ...

What is a knowledge graph and how can it empower data catalog capabilities?

Knowledge graphs have been interacting with us for quite some time. Whether it be through personalized shopping ...

A smart data catalog, a must-have for data leaders

The term "smart data catalog" has become a buzzword over the past few months. However, when referring to something ...

Data science: accelerate your data lake initiatives with metadata

Data lakes offer unlimited storage for data and present lots of potential benefits for data scientists in the ...

DataOps: How data catalogs enable better data discovery in a Big Data project

In today’s world, Big Data environments are more and more complex and difficult to manage. We believe that Big ...

How you’re going to fail your data catalog project (or not…)

There are many solutions on the data catalog market that offer an overview of all enterprise data, all thanks to ...

How does Zeenea Data Catalog empower your data teams?

Data has become one of the main drivers of innovation for many sectors. And as data continues to rapidly ...

How to evaluate your future Data Catalog?

The explosion of data sources in organizations, the heterogeneity of data or even the new demands related to data ...

What is the difference between a data dictionary and a business glossary?

In metadata management, we often talk about data dictionaries and business glossaries. Although they might ...

Data Revolutions: Towards a Business Vision of Data

The use of massive data by the internet giants in the 2000s was a wake-up call for enterprises: Big Data is a ...

What is a Chief Data Officer?

According to a Gartner study presented at the Data & Analytics conference in London 2019, 90% of large ...

How Artificial Intelligence enhances data catalogs

Can machines think? We are talking about artificial intelligence, “the biggest myth of our time”! A simple ...

Data catalog: a self-service data platform

A data catalog is a portal that brings together metadata on the data sets collected by the enterprise. This ...

What are the different types of metadata?

Dealing with large volumes of data is essential to any organization's success. But knowing what kind of data it ...

What is a Data Steward?

Data stewards are the first point of reference for data and serve as an entry point for data access. They have ...

What is a Data Catalog?

It is no secret that the enormous volumes of information that companies generate require the right tools ...

Data mapping: The challenges in an organization

The arrival of Big Data did not simplify how enterprises work with data. The volume, the variety, and the ...

How to map your information system’s data?

Data lineage is defined as the life cycle of data: its origin, movements, and impacts over time. It offers ...

Data lineage in a big data environment

Data lineage is defined as a type of data life cycle. It is a detailed representation of any data over ...

Let's get started

Make data meaningful & discoverable for your teams

© 2026 Zeenea - All Rights Reserved
