Harnessing the Power of AI in Data Cataloging

In an era of ever-growing data volumes, AI is changing how organizations manage and extract value from diverse data sources. As businesses struggle to navigate vast amounts of information, effective data management becomes paramount. At the heart of these strategies lies data cataloging, an essential tool that has evolved significantly with the integration of AI and now promises greater efficiency, accuracy, and actionable insights. This article looks at how.

The benefits of AI in data cataloging

AI improves data cataloging by automating and enhancing traditionally manual processes, which raises efficiency and improves data accuracy across several functions:

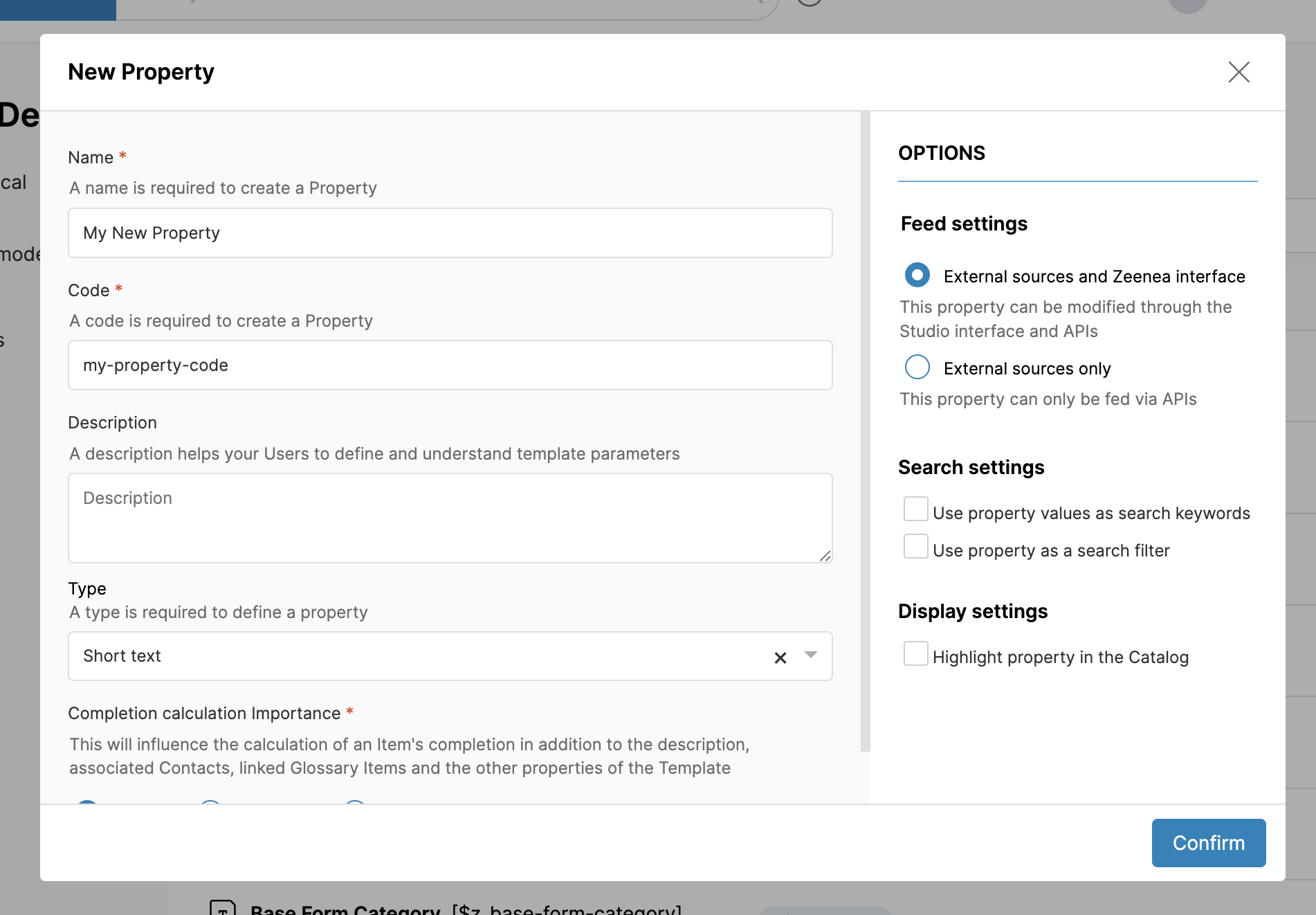

Automated metadata generation

AI algorithms autonomously generate metadata by analyzing and interpreting data assets. This includes identifying data types, relationships, and usage patterns. Machine learning models infer implicit metadata, ensuring comprehensive catalog coverage. Automated metadata generation reduces the burden on data stewards and ensures consistency and completeness in catalog entries. This capability is especially valuable in environments with rapidly expanding data volumes, where manual metadata creation is impractical.
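To make the idea of inferring metadata from the data itself concrete, here is a minimal sketch that profiles a column of raw string values. It uses simple parsing rules as a stand-in for a learned model, and the names `infer_type` and `profile_column` are illustrative assumptions, not part of any particular catalog product.

```python
from collections import Counter
from datetime import datetime


def infer_type(value: str) -> str:
    """Guess a coarse type for one raw string value (rule-based stand-in)."""
    if value == "":
        return "null"
    for caster, type_name in ((int, "integer"), (float, "float")):
        try:
            caster(value)
            return type_name
        except ValueError:
            pass
    try:
        # Assumption: dates arrive in ISO format (YYYY-MM-DD).
        datetime.strptime(value, "%Y-%m-%d")
        return "date"
    except ValueError:
        return "string"


def profile_column(name: str, values: list[str]) -> dict:
    """Build a small metadata record for one column."""
    non_null = [v for v in values if v != ""]
    type_counts = Counter(infer_type(v) for v in non_null)
    dominant = type_counts.most_common(1)[0][0] if type_counts else "null"
    return {
        "column": name,
        "inferred_type": dominant,
        "null_fraction": 1 - len(non_null) / len(values) if values else 0.0,
        "distinct_values": len(set(non_null)),
    }


if __name__ == "__main__":
    print(profile_column("age", ["31", "45", "", "31"]))
    print(profile_column("signup", ["2024-01-05", "2024-02-11"]))
```

A production system would likely sample large tables and combine rules like these with statistical or learned classifiers, but the output shape (one metadata record per column) would be similar.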

Simplified data classification and tagging

AI facilitates precise data classification and tagging using natural language processing (NLP) techniques. By understanding contextual nuances and semantics, AI enhances categorization accuracy, which is particularly beneficial for unstructured data formats such as text and multimedia. Advanced AI models can learn from historical tagging decisions and user feedback to improve classification accuracy. This capability simplifies data discovery processes and enhances data governance by consistently and correctly categorizing data.
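Learning from historical tagging decisions can be sketched with a deliberately simple model. The example below counts which words co-occurred with which tags in past decisions and uses those counts to suggest tags for a new description. It is a toy stand-in for real NLP models, and `TagSuggester` and the sample tags are hypothetical.

```python
import re
from collections import Counter, defaultdict


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphabetic tokens."""
    return re.findall(r"[a-z]+", text.lower())


class TagSuggester:
    """Suggest tags from word/tag co-occurrence in past tagging decisions."""

    def __init__(self) -> None:
        self.token_tag_counts: dict[str, Counter] = defaultdict(Counter)

    def learn(self, description: str, tags: list[str]) -> None:
        """Record one historical decision: a description and its tags."""
        for token in set(tokenize(description)):
            for tag in tags:
                self.token_tag_counts[token][tag] += 1

    def suggest(self, description: str, top_n: int = 2) -> list[str]:
        """Return the tags whose past contexts best overlap this description."""
        scores: Counter = Counter()
        for token in set(tokenize(description)):
            scores.update(self.token_tag_counts.get(token, {}))
        return [tag for tag, _ in scores.most_common(top_n)]


if __name__ == "__main__":
    suggester = TagSuggester()
    suggester.learn("customer email and phone contact details", ["pii"])
    suggester.learn("monthly revenue and invoice totals", ["finance"])
    suggester.learn("customer invoice history", ["finance"])
    print(suggester.suggest("customer email list", top_n=1))
```

Calling `learn` again whenever a data steward confirms or corrects a suggestion is one simple way to fold user feedback back into the model.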

Enhanced search capabilities

AI-powered data catalogs feature advanced search capabilities that enable swift and targeted data retrieval. AI recommends relevant data assets and related information by understanding user queries and intent. Through techniques such as relevance scoring and query understanding, AI ensures that users can quickly locate the most pertinent data for their needs, thereby accelerating insight generation and reducing time spent on data discovery tasks.
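Relevance scoring can take many forms. As one hedged illustration, the sketch below ranks catalog entries against a query with a basic TF-IDF style score, so that rare query terms count for more than common ones. The `search` function and the sample catalog are assumptions made for this example; real catalogs may use embeddings or a dedicated search engine instead.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase and split on anything that is not a letter or digit."""
    return re.findall(r"[a-z0-9]+", text.lower())


def search(query: str, catalog: dict[str, str], top_n: int = 3) -> list[str]:
    """Rank catalog entries (name -> description) by a TF-IDF style score."""
    docs = {
        name: Counter(tokenize(name + " " + description))
        for name, description in catalog.items()
    }
    n_docs = len(docs)
    results = []
    for name, counts in docs.items():
        score = 0.0
        for term in tokenize(query):
            doc_freq = sum(1 for c in docs.values() if term in c)
            if doc_freq and counts[term]:
                # Smoothed inverse document frequency: rarer terms weigh more.
                idf = math.log((1 + n_docs) / (1 + doc_freq)) + 1
                score += counts[term] * idf
        if score > 0:
            results.append((name, score))
    results.sort(key=lambda item: item[1], reverse=True)
    return [name for name, _ in results[:top_n]]


if __name__ == "__main__":
    catalog = {
        "sales_orders": "Daily customer orders with revenue",
        "hr_employees": "Employee records and salaries",
        "web_clicks": "Clickstream events from the website",
    }
    print(search("customer revenue", catalog))
```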

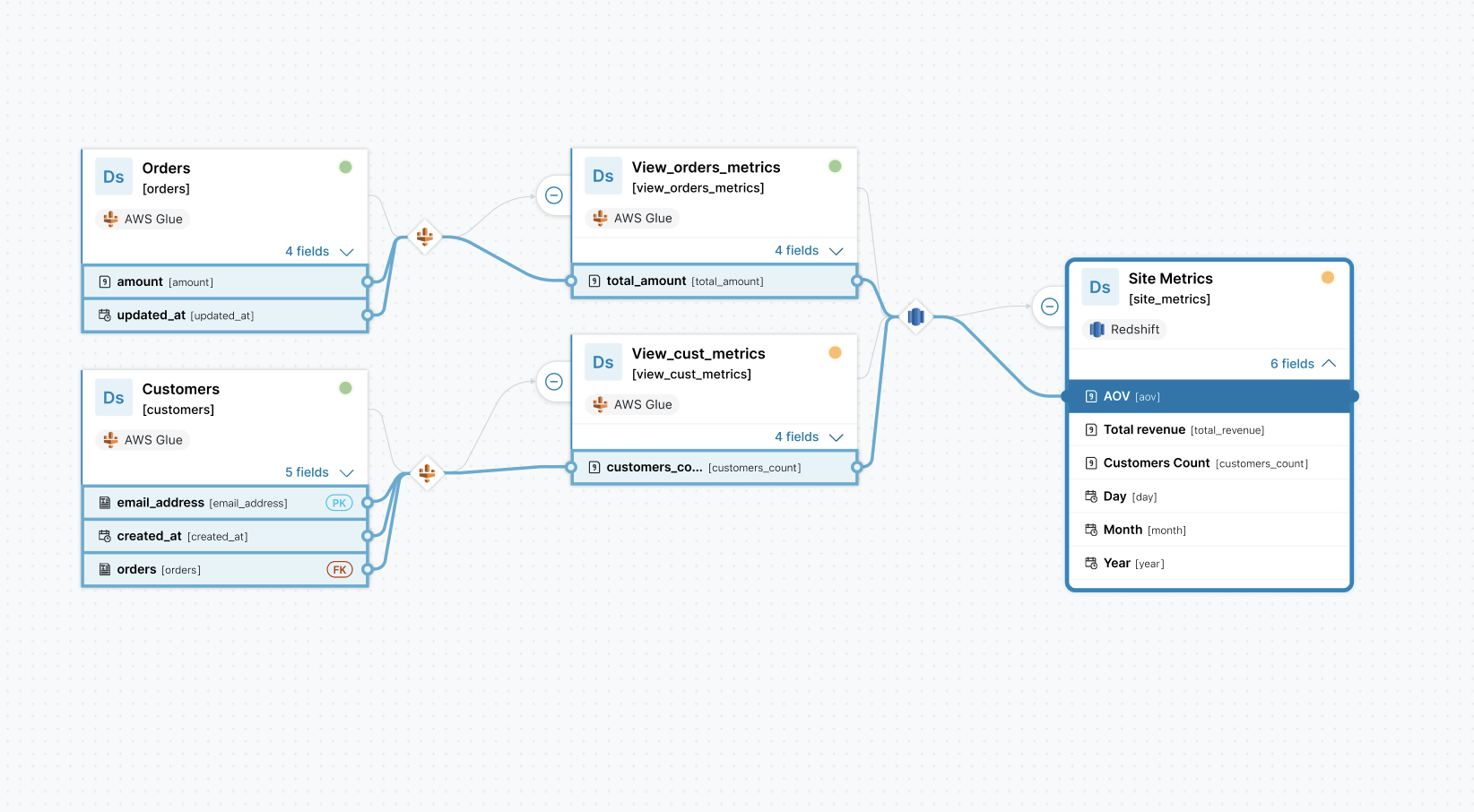

Robust data lineage and governance

AI helps track data lineage by tracing each dataset's origins, transformations, and usage history. This capability supports robust data governance and compliance with regulatory standards. Real-time lineage updates provide a transparent view of data provenance, enabling organizations to maintain data integrity and traceability throughout the data lifecycle. AI-driven lineage tracking is essential in environments where data flows through complex pipelines and undergoes multiple transformations, because it keeps all data usage documented and auditable.
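Whatever technique discovers the lineage, the result is usually stored as a graph of datasets and transformations. The following sketch shows one possible minimal representation and how to answer the question "where did this dataset come from?". `LineageGraph` and the dataset names are hypothetical.

```python
from collections import deque


class LineageGraph:
    """Store which datasets were derived from which inputs, and how."""

    def __init__(self) -> None:
        # Maps each output dataset to a list of (source, transformation) pairs.
        self.parents: dict[str, list[tuple[str, str]]] = {}

    def record(self, output: str, inputs: list[str], transformation: str) -> None:
        """Register one pipeline step that produced `output` from `inputs`."""
        self.parents.setdefault(output, []).extend(
            (source, transformation) for source in inputs
        )

    def upstream(self, dataset: str) -> list[str]:
        """Return all ancestors of `dataset`, nearest first (breadth-first)."""
        seen: set[str] = set()
        order: list[str] = []
        queue = deque([dataset])
        while queue:
            current = queue.popleft()
            for source, _ in self.parents.get(current, []):
                if source not in seen:
                    seen.add(source)
                    order.append(source)
                    queue.append(source)
        return order


if __name__ == "__main__":
    graph = LineageGraph()
    graph.record("clean_orders", ["raw_orders"], "deduplicate")
    graph.record("revenue_report", ["clean_orders", "fx_rates"], "join and aggregate")
    print(graph.upstream("revenue_report"))
```

Keeping the transformation label on each edge is what makes the history auditable: an auditor can see both which sources fed a report and which step combined them.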

Intelligent Recommendations

AI-driven recommendations empower users by suggesting optimal data sources for analyses and identifying potential data quality issues. These insights derive from historical data usage patterns. Machine learning algorithms analyze past user behaviors and data access patterns to recommend datasets that are likely to be relevant or valuable for specific analytical tasks. By proactively guiding users toward high-quality data and minimizing the risk of using outdated or inaccurate information, AI enhances the overall effectiveness of data-driven operations.
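As a rough illustration of recommending datasets from past access patterns, the sketch below applies a simple "users who accessed what you accessed also used" heuristic to an access log. The `recommend` function and the log format of `(user, dataset)` pairs are assumptions for this example, and a real system would likely also weigh recency and data quality signals.

```python
from collections import Counter


def recommend(access_log: list[tuple[str, str]], user: str, top_n: int = 2) -> list[str]:
    """Suggest datasets used by people whose access history overlaps the user's."""
    datasets_by_user: dict[str, set[str]] = {}
    for log_user, dataset in access_log:
        datasets_by_user.setdefault(log_user, set()).add(dataset)

    mine = datasets_by_user.get(user, set())
    scores: Counter = Counter()
    for other, datasets in datasets_by_user.items():
        if other == user:
            continue
        overlap = len(mine & datasets)
        if overlap == 0:
            continue  # No shared history, so this user tells us nothing.
        for dataset in datasets - mine:
            # Weight each candidate by how similar the other user is.
            scores[dataset] += overlap
    return [dataset for dataset, _ in scores.most_common(top_n)]


if __name__ == "__main__":
    log = [
        ("ana", "orders"), ("ana", "customers"),
        ("ben", "orders"), ("ben", "customers"), ("ben", "refunds"),
        ("cy", "orders"), ("cy", "refunds"), ("cy", "fx_rates"),
        ("dee", "hr"),
    ]
    print(recommend(log, "ana"))
```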

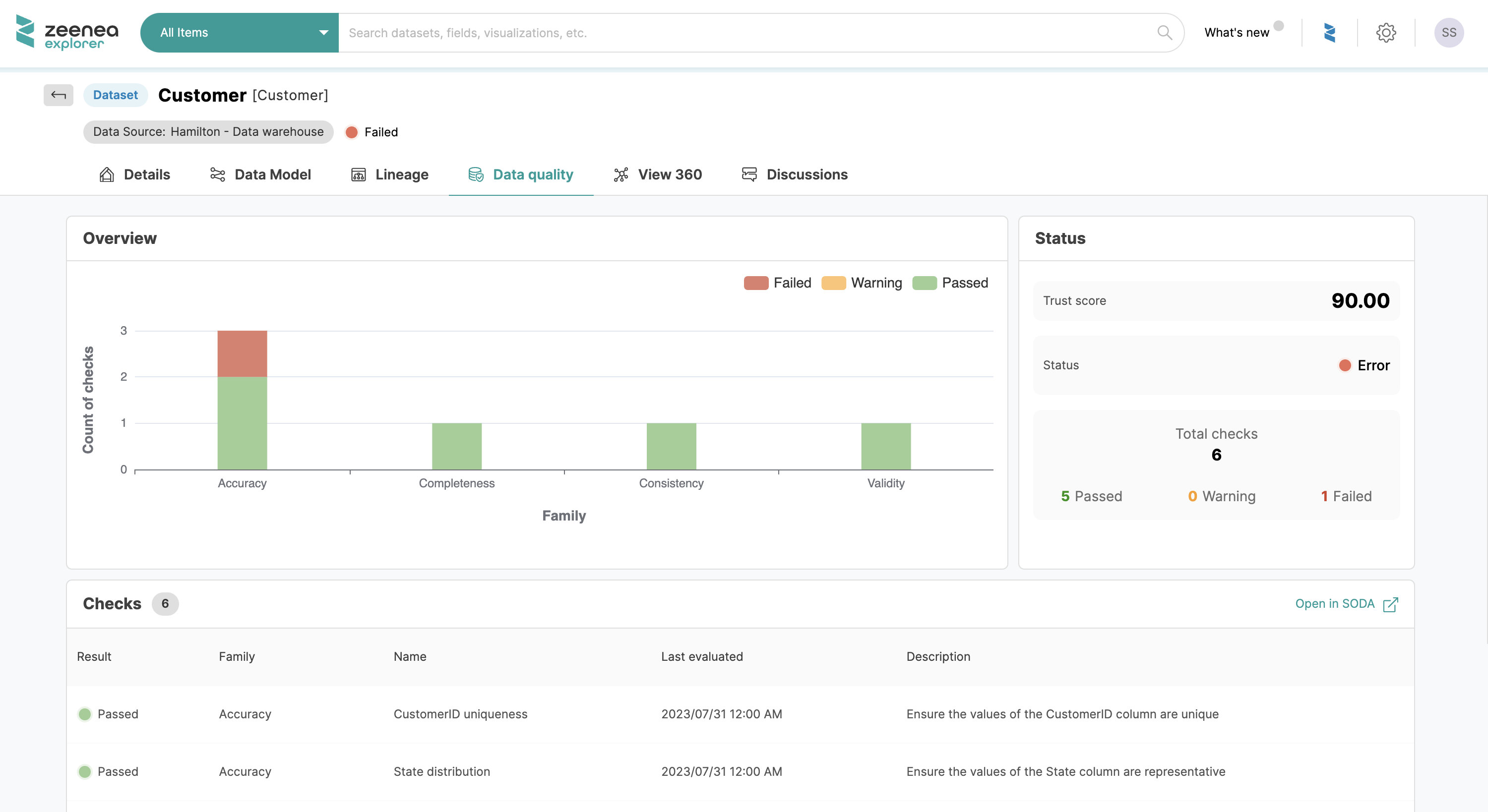

Anomaly Detection

AI-powered continuous monitoring detects anomalies that indicate data quality issues or security threats. The underlying algorithms use statistical analysis and machine learning techniques to identify deviations from expected data patterns. Detecting anomalies early allows timely corrective action, safeguarding data integrity and reliability.

This capability is critical in detecting data breaches, erroneous data entries, or system failures that could compromise data quality or pose security risks. By alerting data stewards to potential issues in real-time, AI enables proactive management of data anomalies, thereby mitigating risks and ensuring data consistency and reliability.
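The statistical side of anomaly detection can be sketched in a few lines. The example below flags values that sit far from the median, using the median absolute deviation (a robust alternative to the standard deviation). The function name, the threshold of 3.5, and the sample row counts are illustrative assumptions rather than a recommended configuration.

```python
import statistics


def find_anomalies(values: list[float], threshold: float = 3.5) -> list[int]:
    """Return indexes of values whose modified z-score exceeds the threshold."""
    median = statistics.median(values)
    deviations = [abs(v - median) for v in values]
    mad = statistics.median(deviations)  # median absolute deviation
    if mad == 0:
        return []  # Over half the values equal the median; this score cannot be computed.
    # 0.6745 rescales the MAD so scores are comparable to standard z-scores.
    return [
        index
        for index, deviation in enumerate(deviations)
        if 0.6745 * deviation / mad > threshold
    ]


if __name__ == "__main__":
    daily_row_counts = [1000, 1020, 990, 1010, 1005, 15, 998]
    print(find_anomalies(daily_row_counts))  # -> [5]
```

Here the sixth load (index 5) delivered only 15 rows, which is the kind of sudden drop that could point to a failed ingestion job and would be worth surfacing to a data steward.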

The challenges and considerations of AI in data cataloging

Despite its advantages, AI-enhanced data cataloging presents challenges requiring careful consideration and mitigation strategies.

Data Privacy and Security

Protecting sensitive information requires robust security measures and compliance with data protection regulations such as GDPR. AI systems must ensure data anonymization, encryption, and access control to safeguard against unauthorized access or data breaches.
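As one small example of such a safeguard, the sketch below replaces email addresses in free text with keyed-hash tokens before the text is stored in a catalog. Note that this is pseudonymization rather than full anonymization: anyone holding the key can recompute the same token for a known address, and pseudonymized data is still treated as personal data under GDPR. The regular expression, function names, and key handling are simplified assumptions.

```python
import hashlib
import hmac
import re

# Simplified pattern; real email detection is usually more thorough.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Map a value to a stable token using a keyed hash (HMAC-SHA256)."""
    digest = hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def mask_emails(text: str, secret_key: bytes) -> str:
    """Replace every email address in the text with a pseudonymous token."""
    return EMAIL_PATTERN.sub(
        lambda match: "user_" + pseudonymize(match.group(0), secret_key),
        text,
    )


if __name__ == "__main__":
    # Assumption: in practice the key would come from a secrets manager.
    key = b"demo-key-do-not-use"
    print(mask_emails("Contact jane.doe@example.com for access", key))
```

Because the same address always maps to the same token, records can still be joined or counted per user without exposing the address itself.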

Scalability

Implementing AI at scale demands substantial computational resources and scalable infrastructure capable of handling large volumes of data. Organizations must invest in robust IT frameworks and cloud-based solutions to support AI-driven data cataloging initiatives effectively.

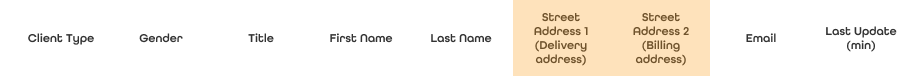

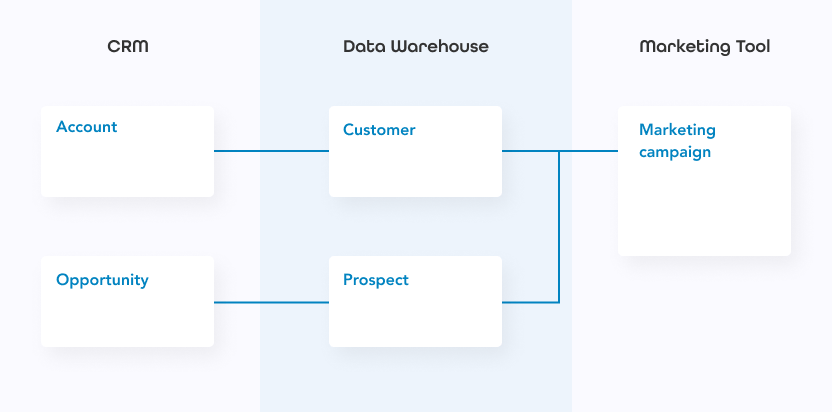

Data Integration

Harmonizing data from disparate sources into a cohesive catalog remains complex, necessitating robust integration frameworks and data governance practices. AI can facilitate data integration by automating data mapping and transformation processes. However, organizations must ensure compatibility and consistency across heterogeneous data sources.

In conclusion, integrating AI into data cataloging marks a significant step forward in data management, improving both efficiency and accuracy. By automating critical processes and surfacing intelligent insights, AI helps organizations make full use of the data assets in their catalog. Realizing these benefits depends on addressing the privacy, security, scalability, and integration challenges described above. As AI technology advances, its role in data cataloging will increasingly drive innovation and strategic decision-making across industries.